Like most people, I’ve grown up with computers. I’m 52 and I’ve been messing around with computers since I was 12, in 1982.

Coding is cozy

I’ve always associated learning how to code with the cold, grey winter days near the solstice. There’s something about wanting to be inside when it’s blustery out, concentrating on a task. I think it’s the same reason people like to do jigsaw or crossword puzzles, build models, knit, craft, bake cookies, or play quiet board games. And maybe it’s not an accident that a lot of people take part in the Advent of Code this time of year. Coding is cozy.

Apollo, PA

My mom was a math teacher at a small Catholic elementary school in a town with a weird, retro-future connection: Apollo, Pennsylvania. The town was laid out in 1790 and renamed Apollo in 1848. It is one of the few (maybe the only) city/state palindromes. Although it is a tiny village, the town also has a moon landing festival that has been running almost uninterrupted since 1969 to celebrate the original Apollo moon landing. To be clear, the town has no connection to the NASA mission other than the name “Apollo”. Apollo also had a nuclear facility subcontracted by Westinghouse to produce nuclear fuel. The plant had a terrible safety record and was at the center of a missing uranium-235 scandal, which gave the town a slightly creepy, Cold War vibe.

Our first computer

The school got a small grant to purchase a single TRS-80 Model I in 1982, when I was in 7th grade. They later purchased a TRS-80 Model III. None of the teachers knew how to use it, and they were not interested. So my mom brought it home for a few weeks over the Christmas break in 1982, and we kept it for a little while as she learned how to use it. Of course, I wanted to learn how to use it too.

There was no hard drive. There wasn’t even a “floppy” drive. There was no connection to the internet (though of course you wanted the acoustic phone modem), and no preinstalled software or apps. Data was stored on a cassette tape. If you wanted to run something, you often found the code printed out in a magazine or a book. The Model I guide book came with the code for several simple games and programs. And that’s how I learned to code: during winter break, typing out TRS-80 Level I BASIC.

I don’t think I have a picture of me with the old Model I, but here I am with the Model III a year later (it stored programs on a floppy disk, which I’m holding). I know this was also during the wintertime because I’m still in the Pittsburgh Steelers pyjamas that I got for Christmas.

Coding is poetry

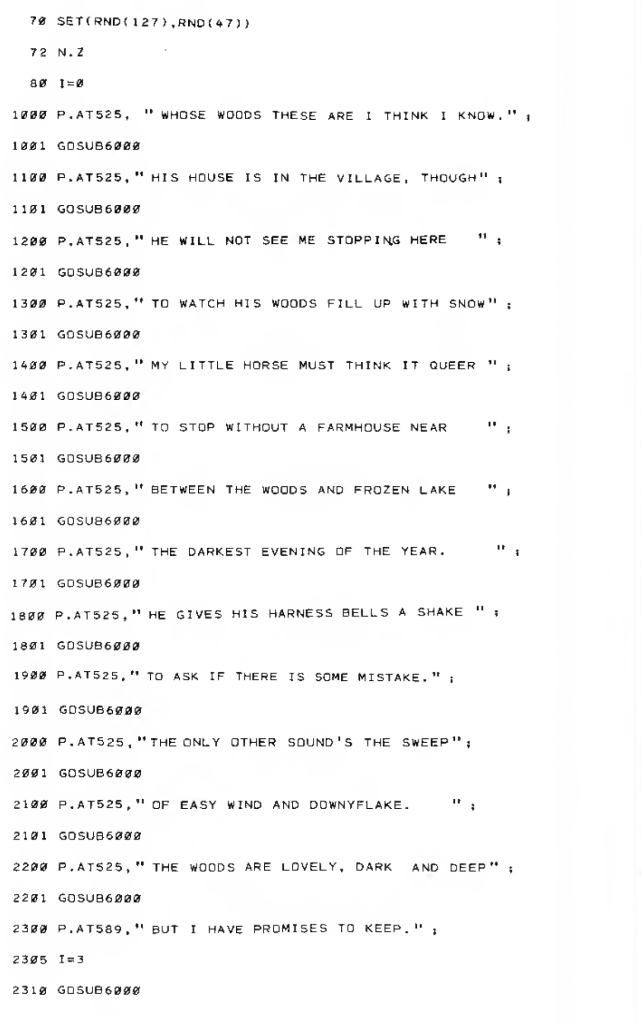

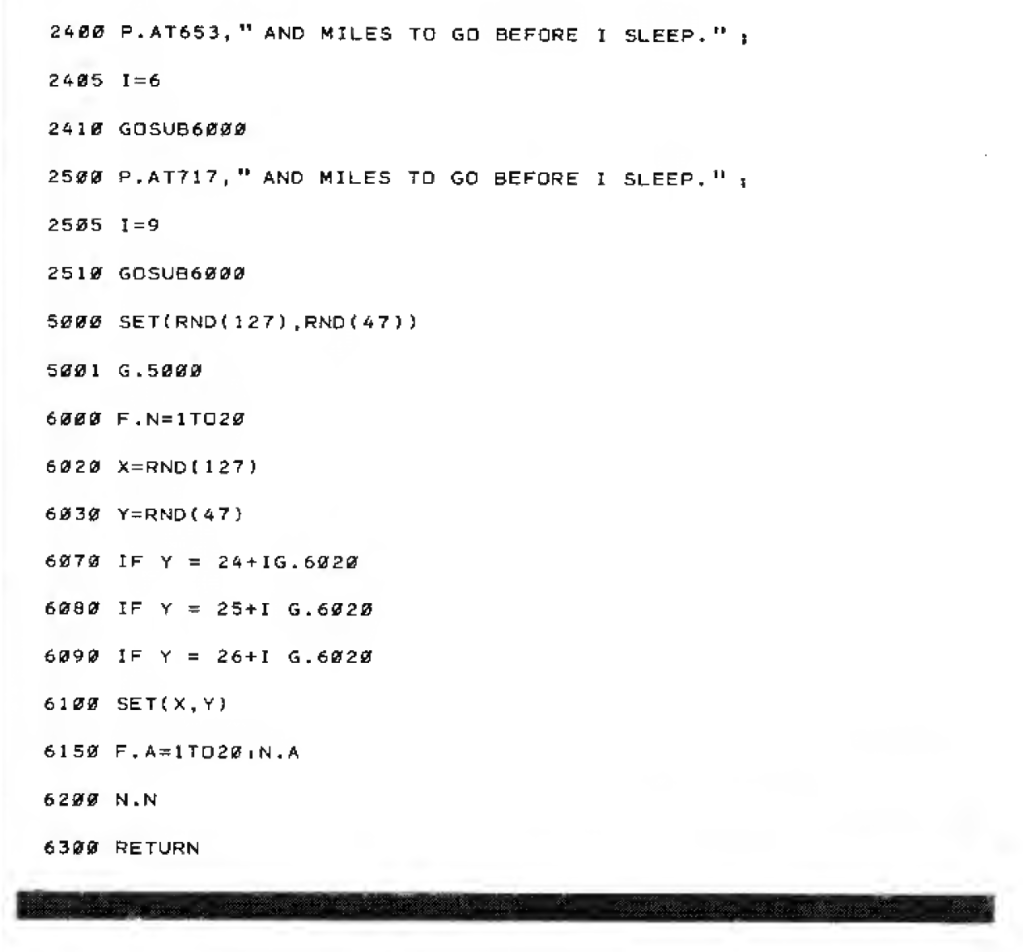

One of my favourite programs of all time was “Stopping by the Woods”, which was printed in the original TRS-80 Model I manual. It reproduced the poem by Robert Frost line by line on the screen. At the same time, it used a simple randomization subroutine to place pixel blocks on the screen that looked like snow. The monitor was monochrome, with only white pixels on black, so it worked really well. I remember doing this during the wintertime and being struck by the way it brought together poetry, chance, and coding. I think that had a big influence on me.

Each line of the poem is listed separately, and after each line, a command (GOSUB 6000) jumps to a subroutine that generates random snow and then hands control back to where it came from (RETURN). The screenshot of the listing shows this.
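If you’ve never seen BASIC, here is a rough sketch of that same structure in Python. This is not the listing from the manual. The function names `snow` and `recite`, the number of flakes per call, and the use of a set to stand in for the screen are all my own assumptions, and I’ve only included the opening lines of the poem. The idea is just to show “print a line, jump to the snow routine, come back”.

```python
import random

# Screen size in block "pixels". 128 x 48 matches the TRS-80 Model I's
# block graphics, but any size works for this sketch.
WIDTH, HEIGHT = 128, 48

# Opening lines of Robert Frost's poem (the rest is omitted here).
POEM_LINES = [
    "Whose woods these are I think I know.",
    "His house is in the village though;",
    "He will not see me stopping here",
    "To watch his woods fill up with snow.",
]


def snow(lit, rng, flakes=20):
    """Hypothetical stand-in for the subroutine at line 6000."""
    for _ in range(flakes):
        x = rng.randrange(WIDTH)
        y = rng.randrange(HEIGHT)
        lit.add((x, y))  # turn on one block at a random position
    # Reaching the end of the function plays the role of RETURN.


def recite(rng=None):
    """Show each poem line, then call the snow routine (the GOSUB step)."""
    rng = rng or random.Random()
    lit = set()  # blocks that have been turned on so far
    for line in POEM_LINES:
        print(line)
        snow(lit, rng)
    return lit


if __name__ == "__main__":
    blocks = recite()
    print(f"{len(blocks)} snow blocks lit")
```

Each run scatters the snow differently, which is the “chance” part. Passing in a seeded generator, for example `recite(random.Random(0))`, makes the snowfall repeatable.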

If you have a few quiet days over the next few weeks, I hope you have a chance to do some coding, create and solve some new puzzles, read some winter poetry, or just find the time to reflect on the things that give you peace.